Edge AI has moved from experimental pilots to mission-critical deployments in manufacturing, healthcare, retail, transportation, and smart infrastructure. As organizations push intelligence closer to where data is generated, they need reliable platforms that simplify model deployment, monitoring, and lifecycle management on resource-constrained devices. Choosing the right Edge AI deployment platform can significantly reduce integration complexity, improve performance, and strengthen security across distributed systems.

TLDR: Edge AI platforms enable organizations to deploy and manage machine learning models directly on devices such as cameras, sensors, gateways, and industrial controllers. The leading solutions combine model optimization, remote orchestration, security, and device management in a scalable framework. This article outlines six production-ready Edge AI deployment platforms that help teams move from prototype to real-world implementation.

1. AWS IoT Greengrass

AWS IoT Greengrass extends Amazon Web Services to edge devices, enabling them to act locally on data while still leveraging cloud capabilities for management and training. It is particularly suited for organizations already operating within the AWS ecosystem.

Key capabilities:

- Local inference: Run AWS Lambda functions and machine learning models directly on edge devices without round-trip cloud latency.

- Offline functionality: Continue operating even when disconnected from the internet.

- Cloud synchronization: Synchronize locally buffered data with the cloud once connectivity is restored.

- Security: Built-in authentication and encryption using AWS security primitives.
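
The offline and synchronization behavior in the list above follows a store-and-forward pattern. The sketch below is a minimal, generic illustration of that pattern in Python. It does not use any AWS API: the class name `StoreAndForwardBuffer`, the `publish` callback, and the `online` flag are hypothetical, and Greengrass provides its own mechanisms for buffering and syncing data.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Hypothetical helper: queue messages locally, send them when online."""

    def __init__(self, max_items=1000):
        # A bounded deque drops the oldest message once the buffer is full,
        # which keeps memory use predictable on a constrained device.
        self._queue = deque(maxlen=max_items)

    def record(self, message, publish, online):
        """Buffer a message and flush the backlog if the device is online.

        Returns the number of messages handed to `publish`.
        """
        self._queue.append(message)
        return self.flush(publish) if online else 0

    def flush(self, publish):
        """Send buffered messages in arrival order; return how many were sent."""
        sent = 0
        while self._queue:
            publish(self._queue[0])  # if publish raises, the message stays queued
            self._queue.popleft()
            sent += 1
        return sent


uploaded = []  # stands in for a cloud endpoint (assumption for this example)
buffer = StoreAndForwardBuffer()
buffer.record({"temp_c": 71.2}, uploaded.append, online=False)
buffer.record({"temp_c": 71.9}, uploaded.append, online=False)
sent = buffer.record({"temp_c": 72.4}, uploaded.append, online=True)
```

In this example the first two readings are held locally while the device is offline, and all three are delivered in order once the third call reports that connectivity is back. A production buffer would usually persist to disk as well, so that a reboot does not lose the backlog.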

Greengrass supports frameworks such as TensorFlow and PyTorch through Amazon SageMaker integration, allowing teams to train in the cloud and deploy optimized artifacts to devices. For enterprise use cases such as predictive maintenance or visual anomaly detection in industrial facilities, this continuity between training and deployment can significantly reduce operational friction.

The trade-off is coupling: deep integration with the broader AWS environment is one of Greengrass's strongest advantages, so the platform pays off most for organizations already committed to AWS infrastructure.

2. Microsoft Azure IoT Edge

Azure IoT Edge is Microsoft’s edge deployment framework that enables containerized AI workloads to run on edge devices. It leverages familiar DevOps and container orchestration tools, making it particularly attractive for enterprises standardized on Microsoft technologies.

Core strengths:

- Container-based deployment: Uses Docker containers for flexible packaging and portability.

- Azure Machine Learning integration: Streamlined deployment of trained models to edge hardware.

- Centralized management: Monitor thousands of devices through Azure IoT Hub.

- Enterprise compliance: Enterprise-grade identity management and security policies.

One notable advantage is the modular architecture. Developers can deploy multiple independent modules, including AI inference engines, custom code, and third-party services, onto a single device. This modularity supports complex workflows, such as video analytics pipelines or industrial automation systems.

For industries with strict compliance requirements—such as healthcare or energy—Azure IoT Edge’s integration with Microsoft’s security and identity stack can provide an additional layer of operational confidence.

3. NVIDIA Jetson Platform

For performance-intensive Edge AI applications—especially computer vision and robotics—the NVIDIA Jetson platform stands out. Unlike purely software-based orchestration tools, Jetson offers a combination of specialized hardware and software tailored for AI inference at the edge.

Why organizations choose Jetson:

- GPU acceleration: High-performance inference for real-time vision and deep learning tasks.

- CUDA and TensorRT optimization: Accelerate and compress models for efficient device execution.

- Support for major frameworks: TensorFlow, PyTorch, ONNX, and others.

- Scalability across device classes: From compact modules to more powerful edge servers.

Jetson is widely adopted in autonomous machines, smart surveillance, medical imaging devices, and retail analytics systems. Developers can optimize models with NVIDIA TensorRT, for example by running inference at reduced precision such as FP16 or INT8, to achieve lower latency and reduced power consumption, usually at the cost of only a small loss in accuracy.
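
To make the accuracy trade-off concrete, here is a minimal sketch of symmetric 8-bit quantization, the general idea behind reduced-precision inference. It is plain Python and not the TensorRT API. TensorRT's own calibration and layer fusion are considerably more sophisticated, and the function names and example weights here are made up for illustration.

```python
def quantize_int8(values):
    """Map floats to integers in [-127, 127] using one shared scale factor.

    Assumes `values` is a non-empty list of floats.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer representation."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # made-up example weights
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the reconstruction error is at most half of `scale`. That bounded error is why quantized models often lose only a little accuracy, although the actual impact depends on the model and should be measured on representative data.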

While Jetson requires investment in NVIDIA-specific hardware, the tight hardware-software integration often results in better real-time performance than deployments on general-purpose edge hardware.

4. Google Distributed Cloud Edge (Anthos at the Edge)

Google’s edge strategy builds on Anthos, its hybrid and multi-cloud application platform. Google Distributed Cloud Edge extends Kubernetes-based workload orchestration to edge devices, allowing AI services to be managed consistently across cloud and field deployments.

Main advantages:

- Kubernetes-native approach: Consistent deployment model from cloud to edge.

- Centralized control plane: Manage clusters and workloads remotely.

- AI and ML integration: Connect to Vertex AI for training and lifecycle management.

- Carrier-grade support: Designed for telecom and 5G edge use cases.

This platform is well suited for large-scale distributed systems, such as telecommunications infrastructure or geographically dispersed retail locations. By standardizing on containers and Kubernetes, organizations can reduce environment drift and maintain predictable deployment processes.

Its primary strength lies in architectural consistency—particularly valuable for companies pursuing hybrid strategies that span data centers, public cloud, and far-edge environments.

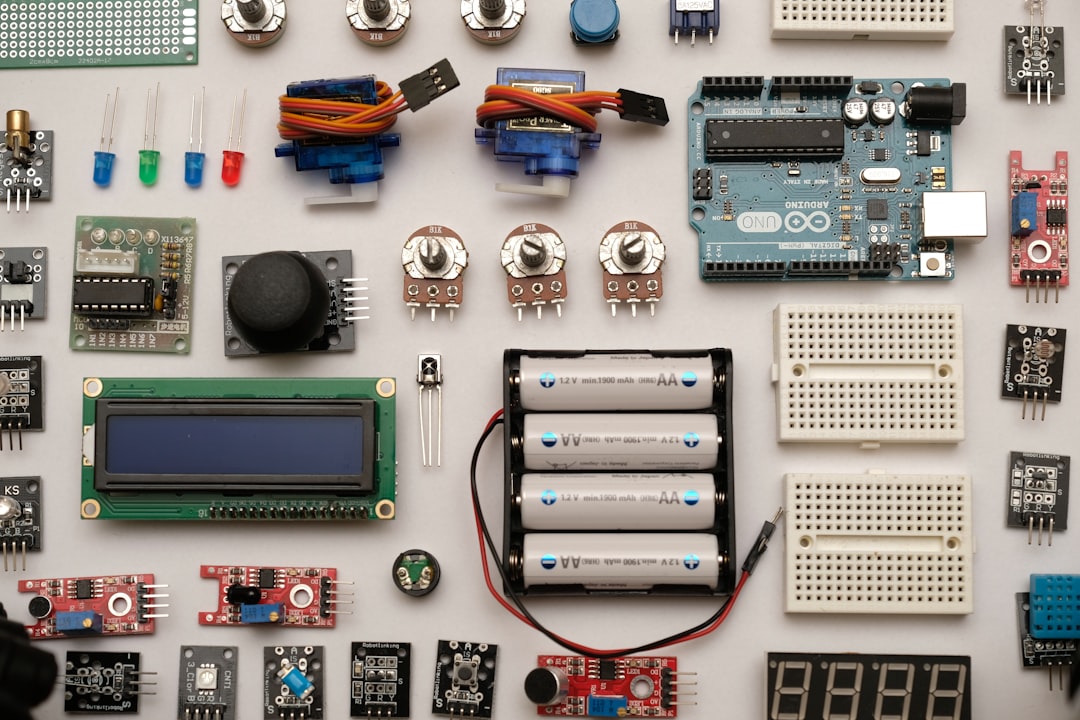

5. Edge Impulse

Edge Impulse is a specialized Edge AI development platform designed to simplify model creation and deployment for embedded systems. It is particularly popular among engineering teams building AI-powered IoT products.

Key features:

- End-to-end workflow: Data collection, labeling, training, optimization, and deployment in one environment.

- TinyML optimization: Efficient deployment on microcontrollers with limited memory.

- Auto-generated deployment packages: C++ libraries and firmware-ready builds.

- Broad hardware support: Compatible with multiple microcontroller vendors.

Edge Impulse excels when computational resources are extremely constrained. For example, if you are building a battery-powered sensor that performs on-device audio classification or vibration anomaly detection, this platform simplifies the optimization process.
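
A quick back-of-the-envelope calculation shows why optimization matters on such devices. The sketch below is a rough estimate in Python, not an Edge Impulse tool. The parameter count and flash budget are hypothetical, and the estimate covers weight storage only, ignoring activations, runtime code, and the rest of the firmware.

```python
def model_size_bytes(num_parameters, bits_per_parameter):
    """Storage needed for the weights alone, rounded up to whole bytes."""
    return (num_parameters * bits_per_parameter + 7) // 8


def fits_in_flash(num_parameters, bits_per_parameter, flash_budget_bytes):
    """True if the weights fit within the given flash budget."""
    return model_size_bytes(num_parameters, bits_per_parameter) <= flash_budget_bytes


NUM_PARAMETERS = 50_000    # hypothetical small audio classifier
FLASH_BUDGET = 128 * 1024  # hypothetical 128 KiB reserved for the model

float32_size = model_size_bytes(NUM_PARAMETERS, 32)  # 200,000 bytes
int8_size = model_size_bytes(NUM_PARAMETERS, 8)      # 50,000 bytes

float32_fits = fits_in_flash(NUM_PARAMETERS, 32, FLASH_BUDGET)
int8_fits = fits_in_flash(NUM_PARAMETERS, 8, FLASH_BUDGET)
```

Under these assumptions the 32-bit model overshoots the budget while the 8-bit version fits with room to spare, which is the kind of gap that quantization for microcontrollers is meant to close.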

Rather than focusing on large-scale fleet orchestration, Edge Impulse prioritizes streamlined model delivery to embedded systems. As such, it is ideal for product-focused teams rather than cloud-centric IT departments.

6. IBM Edge Application Manager

IBM Edge Application Manager is built for large enterprises that need autonomous management of tens of thousands of edge nodes. It focuses on policy-driven automation and secure workload distribution.

Distinguishing capabilities:

- Autonomous deployment: Automatically deploy workloads based on node policies.

- Massive scalability: Designed to manage fleets exceeding 10,000 devices.

- Open Horizon foundation: Built on open-source edge computing principles.

- Enterprise security controls: Strong encryption and identity verification mechanisms.

The platform is particularly well suited for industries with dispersed infrastructure, such as oil and gas, logistics, and global retail chains. By using declarative policies, organizations can ensure that only relevant workloads are deployed to specific classes of devices.

This reduces manual configuration overhead and ensures consistency across widely distributed environments.
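
The idea of policy-driven placement can be sketched in a few lines of Python. This is a simplified illustration, not the policy syntax or API of IBM Edge Application Manager or Open Horizon. The node names, property keys, and the `(key, operator, value)` constraint format are all hypothetical.

```python
import operator

# Comparison operators supported by this toy constraint format.
OPS = {"==": operator.eq, ">=": operator.ge, "<=": operator.le}


def node_matches(properties, constraints):
    """True if the node's properties satisfy every constraint."""
    for key, op, expected in constraints:
        # A node that does not declare a property never matches on it.
        if key not in properties or not OPS[op](properties[key], expected):
            return False
    return True


def select_nodes(nodes, constraints):
    """Return the names of all nodes eligible for a workload."""
    return [name for name, props in nodes.items() if node_matches(props, constraints)]


# Hypothetical fleet: each node advertises its own properties.
nodes = {
    "camera-gw-01": {"has_gpu": True, "memory_mb": 8192, "site": "warehouse"},
    "sensor-hub-07": {"has_gpu": False, "memory_mb": 512, "site": "warehouse"},
    "camera-gw-02": {"has_gpu": True, "memory_mb": 2048, "site": "store"},
}

# Hypothetical policy: the vision workload needs a GPU and at least 4 GB of RAM.
vision_constraints = [("has_gpu", "==", True), ("memory_mb", ">=", 4096)]
targets = select_nodes(nodes, vision_constraints)
```

Only `camera-gw-01` satisfies both constraints here. The point of the declarative style is that nobody lists target devices by hand: when a new node with matching properties joins the fleet, it becomes eligible automatically.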

How to Choose the Right Edge AI Deployment Platform

Selecting a deployment platform should be based on technical requirements, organizational maturity, and scalability goals. Consider the following evaluation criteria:

- Hardware constraints: Does the platform support the GPUs, CPUs, or microcontrollers your use case requires?

- Model optimization tools: Are quantization and acceleration features built in?

- Fleet management: Can you monitor and update thousands of devices remotely?

- Security architecture: Are encryption, key management, and identity controls robust?

- Integration ecosystem: Does it integrate smoothly with your training pipelines and DevOps workflows?

Organizations deploying AI at massive scale often prioritize orchestration and lifecycle management. In contrast, product teams designing smart devices may prioritize efficient embedded inference and minimal power consumption.
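
One way to make these criteria actionable is a simple weighted scoring matrix. The Python sketch below shows the arithmetic. The weights, the 1 to 5 scores, and the candidate names are placeholders rather than assessments of any platform in this article, so substitute your own evaluation results.

```python
def rank_platforms(weights, scores):
    """Rank candidates by weighted average score, highest first."""
    total_weight = sum(weights.values())
    ranked = []
    for platform, per_criterion in scores.items():
        # A criterion that was not scored counts as 0.
        weighted_sum = sum(w * per_criterion.get(c, 0) for c, w in weights.items())
        ranked.append((platform, round(weighted_sum / total_weight, 2)))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


# Placeholder weights: a product team that cares most about hardware fit.
weights = {"hardware": 3, "optimization": 2, "fleet": 1, "security": 2, "integration": 2}

# Placeholder scores on a 1-5 scale for two unnamed candidates.
scores = {
    "candidate_a": {"hardware": 5, "optimization": 4, "fleet": 2, "security": 3, "integration": 3},
    "candidate_b": {"hardware": 3, "optimization": 2, "fleet": 5, "security": 4, "integration": 5},
}

ranking = rank_platforms(weights, scores)
```

With these placeholder numbers `candidate_a` edges ahead at 3.7 versus 3.6. Raising the weight on fleet management would flip the result, which mirrors the difference in priorities between large-scale operators and embedded product teams.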

Final Thoughts

Edge AI is no longer optional for organizations seeking low latency, privacy protection, and operational continuity. Whether deploying computer vision on manufacturing lines, predictive maintenance in energy infrastructure, or intelligent monitoring in healthcare devices, choosing a reliable deployment platform is essential.

AWS IoT Greengrass and Azure IoT Edge offer powerful cloud-integrated solutions. NVIDIA Jetson delivers high-performance hardware acceleration for demanding inference workloads. Google Distributed Cloud Edge provides consistent Kubernetes-based orchestration. Edge Impulse simplifies TinyML and embedded deployment, while IBM Edge Application Manager enables policy-driven automation at enterprise scale.

Each platform addresses a different segment of the Edge AI spectrum. A careful assessment of performance requirements, regulatory constraints, and growth projections will help ensure your Edge AI initiative is not only technically sound but operationally sustainable for years to come.