As artificial intelligence and machine learning systems grow more advanced, the need for efficient data storage and retrieval has become increasingly critical. Traditional databases are well suited for structured data and exact-match queries, but they often struggle with the high-dimensional, semantic data used in modern AI applications. Vector databases have emerged as a powerful solution, enabling faster similarity search, semantic understanding, and scalable machine learning deployment across industries.

TLDR: Vector databases store and index high-dimensional vector embeddings generated by machine learning models. They enable fast similarity search, semantic retrieval, and real-time AI-driven applications such as recommendation engines, chatbots, and image recognition systems. By optimizing for nearest neighbor search and scalability, vector databases bridge the gap between massive datasets and intelligent systems. Organizations using AI increasingly rely on vector databases to power reliable, context-aware experiences.

Understanding Vector Databases

A vector database is specifically designed to store, manage, and query vector embeddings—numerical representations of data such as text, images, audio, or video. These embeddings are typically generated by machine learning models like transformers, convolutional neural networks, or large language models.

Instead of organizing records as rows and columns in relational tables, vector databases store fixed-length arrays of numbers (vectors) that encode semantic meaning, usually alongside an identifier and metadata for each item. For example:

- A sentence can be converted into a 768-dimensional vector.

- An image can become a 2048-dimensional feature vector.

- An audio clip can be represented as a compressed embedding of its acoustic features.

These vectors allow systems to perform similarity search, meaning they can retrieve items that are conceptually similar rather than merely identical in text or structure.
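To make "similarity search" concrete, here is a minimal brute-force (exact) k-nearest-neighbor search using cosine similarity. The three-dimensional vectors and item names are made-up toy values standing in for real model embeddings, which would have hundreds or thousands of dimensions; `knn_search` is a hypothetical helper, not any product's API.

```python
import heapq
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def knn_search(query: list[float], vectors: dict[str, list[float]], k: int = 2):
    """Exact k-nearest-neighbor search: score every stored vector, keep the best k."""
    scored = ((cosine_similarity(query, vec), item_id) for item_id, vec in vectors.items())
    return heapq.nlargest(k, scored)  # list of (score, item_id), highest first


# Hypothetical 3-dimensional toy "embeddings"; real ones come from a trained model.
store = {
    "running shoes": [0.9, 0.1, 0.0],
    "trail sneakers": [0.8, 0.2, 0.1],
    "coffee maker": [0.0, 0.1, 0.9],
}

for score, item in knn_search([0.85, 0.15, 0.05], store, k=2):
    print(f"{item}: {score:.3f}")
```

Scoring every stored vector costs O(N × d) per query, which is exactly the cost that approximate indexes such as HNSW and IVF are designed to avoid at scale.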

Why Traditional Databases Fall Short

Traditional databases excel at structured queries such as:

- Find all users named John.

- Retrieve transactions over $1,000.

However, AI-driven applications require a different type of query:

- Find documents similar in meaning to this paragraph.

- Recommend products visually similar to this image.

- Identify songs that match this acoustic pattern.

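These tasks require nearest neighbor search in high-dimensional spaces, and at scale this usually means approximate nearest neighbor (ANN) search, because comparing a query against every stored vector is too slow. As dimensionality increases, traditional indexing methods become inefficient: B-trees are designed for ordered, one-dimensional keys, and even spatial indexes such as k-d trees degrade toward a full scan. This challenge is often referred to as the curse of dimensionality.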
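One symptom of the curse of dimensionality can be checked numerically: for random points, the nearest and farthest neighbors of a query end up at similar distances as dimensionality grows, so distance-based pruning loses its power. This sketch samples uniformly random points (an illustrative assumption; real embeddings are not uniformly distributed) and reports the ratio of nearest to farthest Euclidean distance.

```python
import math
import random


def nearest_to_farthest_ratio(dim: int, n_points: int = 500, seed: int = 0) -> float:
    """Ratio of nearest to farthest Euclidean distance from a random query.

    Values near 0 mean neighbors are easy to tell apart; values near 1 mean
    every point looks roughly equally far away.
    """
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        dists.append(math.dist(query, point))
    return min(dists) / max(dists)


for dim in (2, 10, 100, 1000):
    print(f"dim={dim:>4}: nearest/farthest = {nearest_to_farthest_ratio(dim):.3f}")
```

The exact values depend on the seed; the trend is what matters. As the ratio approaches 1, "nearest" carries less information and tree-style pruning stops paying off.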

Vector databases address this by implementing specialized indexing structures such as:

- HNSW (Hierarchical Navigable Small World graphs)

- IVF (Inverted File Index)

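- Product Quantization (PQ), a compression technique often combined with IVF

These techniques make retrieval far faster than scanning every vector, at the cost of occasionally missing a true nearest neighbor; most indexes expose parameters that trade speed against recall while keeping accuracy high.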
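Of the structures listed, IVF is the simplest to sketch: cluster the vectors with k-means, store each vector in the inverted list of its nearest centroid, and at query time scan only the `nprobe` lists whose centroids are closest to the query. The `TinyIVF` class below is a hypothetical, simplified illustration in plain Python using squared Euclidean distance; production libraries such as FAISS implement heavily optimized versions, and nothing here mirrors their API.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance (same ordering as true distance, cheaper)."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest(centroids, v):
    """Index of the centroid closest to v."""
    return min(range(len(centroids)), key=lambda i: sq_dist(centroids[i], v))


def kmeans(vectors, k, iters=10, seed=0):
    """Plain Lloyd's k-means; returns k centroids."""
    rng = random.Random(seed)
    centroids = [list(v) for v in rng.sample(vectors, k)]
    for _ in range(iters):
        buckets = [[] for _ in range(k)]
        for v in vectors:
            buckets[nearest(centroids, v)].append(v)
        for i, bucket in enumerate(buckets):
            if bucket:  # keep the previous centroid if a cluster goes empty
                centroids[i] = [sum(col) / len(bucket) for col in zip(*bucket)]
    return centroids


class TinyIVF:
    """Hypothetical minimal IVF index: coarse clustering plus per-cluster lists."""

    def __init__(self, vectors, n_lists=4, seed=0):
        self.centroids = kmeans(vectors, n_lists, seed=seed)
        self.lists = [[] for _ in range(n_lists)]
        for vid, v in enumerate(vectors):
            self.lists[nearest(self.centroids, v)].append((vid, v))

    def search(self, query, k=3, nprobe=1):
        # Probe only the nprobe clusters whose centroids are closest to the query.
        order = sorted(range(len(self.centroids)),
                       key=lambda i: sq_dist(self.centroids[i], query))
        candidates = [item for i in order[:nprobe] for item in self.lists[i]]
        candidates.sort(key=lambda item: sq_dist(item[1], query))
        return [vid for vid, _ in candidates[:k]]


rng = random.Random(42)
data = [[rng.gauss(0, 1) for _ in range(8)] for _ in range(1000)]
index = TinyIVF(data, n_lists=8)
print(index.search(data[0], k=3, nprobe=2))
```

With `nprobe` equal to the number of lists the search becomes exact; smaller values scan less data but can miss neighbors that sit just across a cluster boundary, which is the speed/recall trade-off ANN indexes expose.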

How Vector Databases Power AI Applications

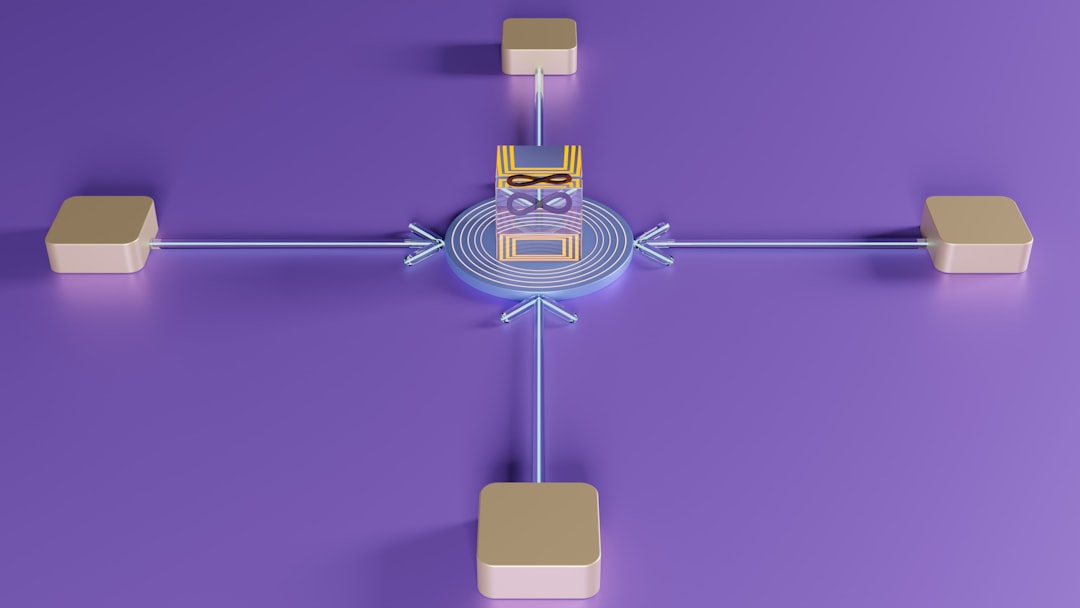

Vector databases play a foundational role in modern AI systems. They act as the memory layer that enables intelligent applications to retrieve context, compare similarities, and make informed predictions.

1. Semantic Search

Search engines powered by vectors understand intent and context, rather than relying solely on keyword matching. When a user searches for “affordable eco-friendly footwear,” a vector database can return results semantically aligned with sustainability and price sensitivity, even if those exact terms are not present.

2. Recommendation Systems

Streaming platforms, e-commerce stores, and content providers use vector embeddings to model user preferences and item characteristics. By comparing vectors, the system can recommend items that are most similar to a user’s historical interactions.

- Product recommendations

- Content personalization

- Friend or connection suggestions
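One simple way to "compare vectors" for recommendations, assumed here purely for illustration, is to average the embeddings of the items a user interacted with into a profile vector and rank unseen items by dot product against it. Real systems often learn user vectors directly (for example, with matrix factorization or two-tower models). The item names, vectors, and the `recommend` helper are hypothetical.

```python
def mean_vector(vectors):
    """Element-wise average of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def recommend(history, catalog, k=2):
    """Rank items the user has not seen by dot product with their profile vector."""
    profile = mean_vector([catalog[item] for item in history])
    unseen = [item for item in catalog if item not in history]
    return sorted(unseen, key=lambda item: dot(profile, catalog[item]), reverse=True)[:k]


# Hypothetical toy item embeddings, hand-picked so the first dimension loosely tracks "action".
catalog = {
    "space battle": [0.9, 0.1, 0.0],
    "heist thriller": [0.8, 0.2, 0.1],
    "car chase": [0.85, 0.05, 0.0],
    "stand-up special": [0.0, 0.95, 0.05],
    "ocean documentary": [0.05, 0.0, 0.95],
}

print(recommend(["space battle", "heist thriller"], catalog))
```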

3. Large Language Model (LLM) Retrieval

Retrieval-augmented generation (RAG) frameworks depend heavily on vector databases. When a user asks a question, the system:

- Converts the query into an embedding.

- Compares it against stored document embeddings.

- Retrieves the most relevant results.

- Feeds them into a language model for context-aware responses.

Grounding the response in retrieved documents typically improves factual accuracy and domain relevance.
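The four retrieval steps listed above can be sketched end to end. To keep the example self-contained, a toy hashed bag-of-words function stands in for a real embedding model (a strong simplifying assumption: it matches shared words, not meaning), and the final step only assembles the prompt string rather than calling a language model. `embed`, `retrieve`, `build_prompt`, and `DIM` are hypothetical names.

```python
import math
import re
import zlib

DIM = 1024  # size of the toy embedding space (assumption)


def embed(text: str) -> list[float]:
    """Toy stand-in for an embedding model: hashed bag of words, L2-normalized."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Steps 1-3: embed the query, compare with document embeddings, keep the top k."""
    q = embed(query)
    doc_vecs = [embed(d) for d in docs]  # in practice, precomputed and stored in the vector DB
    scores = [sum(a * b for a, b in zip(q, v)) for v in doc_vecs]  # cosine, since unit length
    ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    return [docs[i] for i in ranked[:k]]


def build_prompt(query: str, context: list[str]) -> str:
    """Step 4: place the retrieved passages in front of the question for the LLM."""
    context_block = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


docs = [
    "Refunds are issued within 14 days of a returned item arriving at the warehouse.",
    "Our headquarters moved to a new office building in 2021.",
    "Standard shipping takes three to five business days.",
]
question = "How many days until refunds are issued?"
print(build_prompt(question, retrieve(question, docs, k=1)))
```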

4. Image and Video Recognition

Computer vision systems generate embeddings from visual inputs. Vector databases allow:

- Face matching for facial recognition

- Duplicate image detection

- Visual product search

With approximate nearest neighbor indexes, these searches can complete in milliseconds, even across millions or billions of vectors.
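Duplicate image detection, for example, often comes down to flagging pairs whose embeddings are nearly identical. The sketch below compares all pairs against a cosine-similarity threshold; the 0.95 cutoff, file names, and three-dimensional vectors are hypothetical, and at real scale each image would be checked with an ANN query rather than an all-pairs loop.

```python
import itertools
import math


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def near_duplicates(embeddings: dict[str, list[float]], threshold: float = 0.95):
    """Return pairs of IDs whose embeddings have cosine similarity >= threshold."""
    pairs = []
    for (id_a, a), (id_b, b) in itertools.combinations(embeddings.items(), 2):
        if cosine(a, b) >= threshold:
            pairs.append((id_a, id_b))
    return pairs


# Hypothetical image embeddings; a resized copy lands very close to its original.
photos = {
    "beach.jpg": [0.2, 0.9, 0.4],
    "beach_resized.jpg": [0.21, 0.88, 0.41],
    "city.jpg": [0.9, 0.1, 0.3],
}

print(near_duplicates(photos))
```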

Core Components of a Vector Database

While implementations vary, most vector databases include the following components:

- Embedding Storage: Efficient storage of high-dimensional vectors.

- Indexing Engine: Optimized search structures for rapid similarity comparison.

- Query Processing Layer: Handles similarity metrics like cosine similarity or Euclidean distance.

- Scalability Mechanisms: Distributed processing and sharding support.

- Filtering Capabilities: Combines metadata filtering with vector search.

Combining structured filtering with similarity search (sometimes called filtered or hybrid search) is especially valuable. For example, an application might retrieve the most semantically similar items within a given price range or location.
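A minimal way to combine a metadata filter with similarity ranking is pre-filtering: apply the structured condition first, then rank only the surviving items. The product records, field names, and vectors here are hypothetical. Real engines may instead post-filter (rank first, then drop non-matching results, which can leave fewer than k hits) or apply the filter during index traversal.

```python
import math
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    price: float
    embedding: list[float]


def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def filtered_search(products, query_vec, max_price, k=2):
    """Pre-filtering: keep products within budget, then rank them by similarity."""
    candidates = [p for p in products if p.price <= max_price]
    candidates.sort(key=lambda p: cosine(query_vec, p.embedding), reverse=True)
    return [p.name for p in candidates[:k]]


products = [
    Product("recycled-canvas sneaker", 45.0, [0.9, 0.3, 0.1]),
    Product("premium leather boot", 220.0, [0.8, 0.1, 0.5]),
    Product("bamboo-fiber runner", 60.0, [0.85, 0.4, 0.0]),
    Product("stainless water bottle", 25.0, [0.1, 0.9, 0.2]),
]

print(filtered_search(products, [0.9, 0.35, 0.05], max_price=100.0))
```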

Benefits of Vector Databases for Organizations

Organizations adopting AI solutions can gain several concrete benefits from vector databases:

Performance at Scale

Modern vector databases can manage billions of embeddings while maintaining low-latency search results. This is critical for real-time applications.
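At this scale, collections are typically split across shards. A common pattern is scatter-gather: each shard returns its own local top-k, and a coordinator merges them into a global top-k. Because every global top-k item must also be in its own shard's top-k, the merge is exact when each shard searches exactly. The shard layout and function names below are hypothetical; real systems add networking, replication, and an ANN index per shard.

```python
import heapq


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def search_shard(shard: dict[str, list[float]], query, k):
    """Local top-k on one shard (brute force here; an ANN index in practice)."""
    return heapq.nlargest(k, ((dot(query, v), vid) for vid, v in shard.items()))


def distributed_search(shards, query, k=2):
    """Scatter the query to every shard, then merge the partial results."""
    partials = [search_shard(shard, query, k) for shard in shards]  # scatter
    return heapq.nlargest(k, (hit for part in partials for hit in part))  # gather


shards = [
    {"a": [1.0, 0.0], "b": [0.7, 0.7]},
    {"c": [0.9, 0.1], "d": [0.0, 1.0]},
    {"e": [0.95, 0.0]},
]
print(distributed_search(shards, [1.0, 0.0], k=3))
```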

Improved User Experience

Semantic understanding leads to more relevant search results, personalized recommendations, and intelligent responses.

Reduced Model Hallucination

In LLM applications, vector-based retrieval grounds outputs in trusted source documents, which can reduce (though not eliminate) hallucinations and improve accuracy.

Flexible Data Handling

Vector databases can work alongside existing relational or NoSQL databases, acting as a complementary AI layer rather than a replacement.

Popular Use Cases Across Industries

Vector databases are transforming operations in various sectors:

- Healthcare: Matching patient records, analyzing medical imaging, drug discovery research.

- Finance: Fraud detection using behavioral similarity patterns.

- Retail: Visual search and personalized marketing.

- Media: Content tagging, recommendation engines, semantic indexing.

- Cybersecurity: Anomaly detection through pattern embeddings.

Key Distance Metrics in Vector Search

The effectiveness of vector search depends on the chosen similarity measurement. Common metrics include:

- Cosine Similarity: Measures the angle between vectors, ignoring their magnitude.

- Euclidean Distance: Calculates the straight-line distance between vectors.

- Dot Product: Reflects both angle and magnitude; often used in recommendation systems.

The choice depends on the embedding model and application goals; as a rule, use the metric the embedding model was trained with. For text embeddings, cosine similarity is widely used because it measures semantic closeness independently of vector length.
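The three metrics are short enough to write out directly. This sketch also checks a useful identity: for unit-length vectors, the squared Euclidean distance equals 2 - 2 · cos(θ), so cosine similarity, dot product, and Euclidean distance all produce the same ranking once embeddings are normalized, which is why normalizing at ingestion time is a common practice.

```python
import math


def dot_product(a, b):
    """Sum of element-wise products; depends on both angle and magnitude."""
    return sum(x * y for x, y in zip(a, b))


def euclidean_distance(a, b):
    """Straight-line distance between the two points."""
    return math.dist(a, b)


def cosine_similarity(a, b):
    """Dot product of the normalized vectors: depends on angle only."""
    return dot_product(a, b) / (math.hypot(*a) * math.hypot(*b))


def normalize(v):
    """Scale v to unit length."""
    n = math.hypot(*v)
    return [x / n for x in v]


a, b = [3.0, 4.0], [4.0, 3.0]
print(dot_product(a, b), euclidean_distance(a, b), cosine_similarity(a, b))

# For unit vectors: ||u - w||^2 == 2 - 2 * cos(u, w)
u, w = normalize(a), normalize(b)
assert math.isclose(euclidean_distance(u, w) ** 2, 2 - 2 * cosine_similarity(u, w))
```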

Challenges and Considerations

Despite their advantages, vector databases introduce unique challenges:

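- High Memory Usage: Millions of high-dimensional vectors, plus their index structures, can consume large amounts of RAM, so compression techniques are often required.

- Latency Sensitivity: Real-time applications demand optimized indexing strategies.

- Embedding Quality: A poorly trained or domain-mismatched embedding model produces weak search results, regardless of how good the index is.

- Data Governance: Embeddings derived from sensitive data still require compliance protection, since they can leak information about the original content.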

Organizations must ensure that embedding pipelines, indexing configurations, and update strategies are properly managed.
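The memory pressure mentioned above is easy to estimate: raw float32 vectors take n × d × 4 bytes before any index overhead, so 10 million 768-dimensional embeddings need about 30.7 GB. The sketch below computes that and shows scalar quantization to 8-bit codes, one simple compression scheme (product quantization compresses further but is more involved). The single global min/max range is an assumption chosen for clarity; real systems may calibrate ranges per dimension or per segment, and the function names are hypothetical.

```python
def raw_footprint_bytes(n_vectors: int, dim: int, bytes_per_value: int = 4) -> int:
    """Memory for raw vectors only (float32 = 4 bytes); index overhead comes on top."""
    return n_vectors * dim * bytes_per_value


def quantize_8bit(vec, lo, hi):
    """Clamp floats to [lo, hi] and map them linearly onto codes 0..255 (1 byte each)."""
    scale = (hi - lo) / 255
    return bytes(round((min(max(x, lo), hi) - lo) / scale) for x in vec)


def dequantize_8bit(codes, lo, hi):
    """Approximate reconstruction of the original floats from the 8-bit codes."""
    scale = (hi - lo) / 255
    return [lo + c * scale for c in codes]


print(raw_footprint_bytes(10_000_000, 768) / 1e9, "GB as float32")
print(raw_footprint_bytes(10_000_000, 768, bytes_per_value=1) / 1e9, "GB as 8-bit codes")

vec = [-0.42, 0.0, 0.13, 0.97]
codes = quantize_8bit(vec, lo=-1.0, hi=1.0)
approx = dequantize_8bit(codes, lo=-1.0, hi=1.0)
print(list(codes), [round(x, 3) for x in approx])
```

The 4x saving comes at the cost of a per-value rounding error of at most half a quantization step, which slightly perturbs similarity scores.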

The Future of Vector Databases

As AI becomes more integrated into enterprise systems, vector databases are expected to evolve in several key ways:

- Tighter LLM Integration: Seamless support for retrieval-augmented generation pipelines.

- Hybrid Architectures: Native integration with relational and graph databases.

- Edge Deployment: Lightweight vector search at device level.

- Improved Compression: Techniques like quantization to reduce storage demands.

The demand for intelligent systems capable of reasoning over large datasets will continue to increase, positioning vector databases as a core infrastructure component for AI-driven enterprises.

Conclusion

Vector databases have become a foundational technology in enabling modern AI and machine learning applications. By supporting high-dimensional embeddings and efficient similarity search, they unlock the ability to build semantic search engines, intelligent assistants, recommendation systems, and advanced computer vision tools. Unlike traditional databases, they are built specifically for the mathematical and computational challenges of AI workloads.

As organizations increasingly rely on embedding-based architectures and large language models, vector databases serve as the bridge between raw data and meaningful intelligence. Their role in scalable, context-aware systems makes them indispensable in the evolving AI ecosystem.

Frequently Asked Questions (FAQ)


1. What is a vector database in simple terms?

A vector database stores numerical representations of data (embeddings) and allows fast similarity-based searches instead of exact keyword matches.

2. How is a vector database different from a traditional database?

Traditional databases handle structured data and exact queries, while vector databases are optimized for high-dimensional similarity searches used in AI applications.

3. What are embeddings?

Embeddings are numerical vector representations generated by machine learning models that capture semantic meaning from text, images, or other data types.

4. Why are vector databases important for large language models?

They enable retrieval of relevant contextual information, improving accuracy and reducing hallucinations in AI-generated responses.

5. Can vector databases scale to billions of records?

Yes. Many modern vector databases use distributed architectures and optimized indexing algorithms to scale efficiently.

6. What industries benefit most from vector databases?

Industries such as healthcare, finance, retail, cybersecurity, and media benefit significantly from vector-powered AI applications.

7. Are vector databases secure?

Security depends on implementation, but enterprise-ready solutions typically include encryption, access control, and compliance support.